8/30/2023 Permutation test

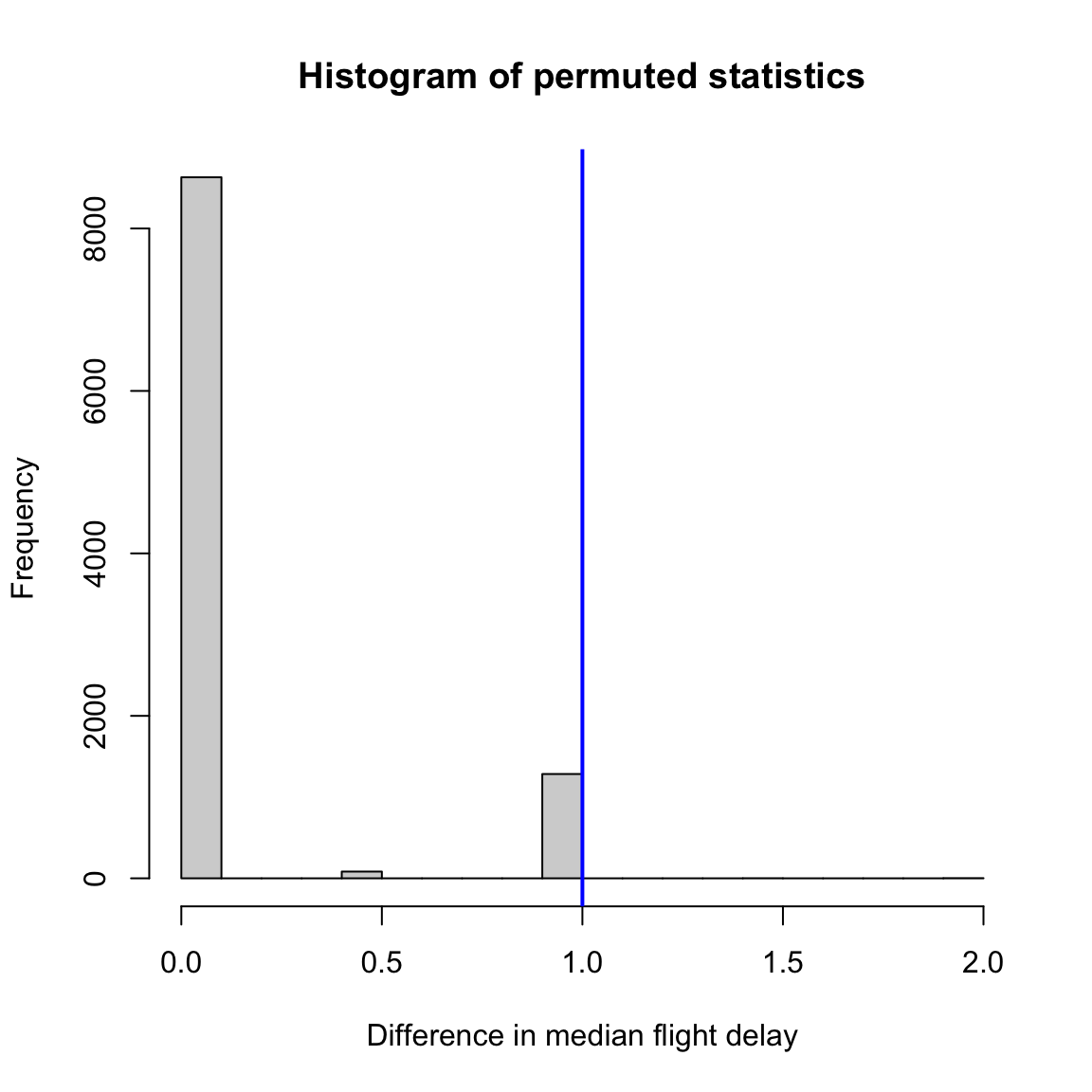

Most of the statistics you learned in introductory statistics are parametric and assume a normal distribution. However, in applied data analysis these assumptions are rarely met, as we typically have small sample sizes from non-normal distributions. Though resampling concepts will seem a little foreign at first, I personally find them more intuitive than the classical statistical approaches, which are based on theoretical distributions. Our lab relies heavily on resampling statistics, and they are amenable to most types of modeling applications, such as fitting abstract computational models, multivariate predictive models, and hypothesis testing.

There are 4 main types of resampling statistics:

- Bootstrap allows us to calculate the precision of an estimator by resampling with replacement.
- Permutation test allows us to perform null-hypothesis testing by empirically computing how often a statistic from the permuted null distribution is at least as extreme as the observed test statistic.
- Jackknife allows us to estimate the bias and standard error of an estimator by creating samples that drop one or more observations.
- Cross-validation provides an unbiased estimate of the out-of-sample predictive accuracy of a model by dividing the data into separate training and test samples, where each data point serves as both training and test data for different models.

In this tutorial, we will focus on the bootstrap and the permutation test. Jackknifing and bootstrapping are both used to calculate the variability of an estimator and often provide numerically similar results. We tend to prefer the bootstrap over the jackknife, but there are specific use cases where you will want to use the jackknife. We will not be covering the jackknife in this tutorial, but encourage the interested reader to review the Wikipedia page for more information. We will also not be covering cross-validation, as this is discussed in the multivariate prediction tutorial.

In statistics, we are typically trying to make inferences about the parameters of a population based on a limited number of randomly drawn samples. How reliable are the parameters estimated from this sample? Would we observe the same parameter if we ran the model on a different independent sample? Bootstrapping offers a way to empirically estimate the precision of the estimated parameter by resampling with replacement from our sample distribution and estimating the parameters with each new subsample.
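To make the bootstrap and the permutation test more concrete, here is a minimal sketch that uses only the Python standard library. The function names `bootstrap_se` and `permutation_test`, the default number of resamples, and the toy data are illustrative assumptions on our part and are not taken from any particular library. The permutation test shown is the common two-group version based on a difference in means; other test statistics would follow the same pattern.

```python
import random
import statistics


def bootstrap_se(sample, estimator=statistics.fmean, n_boot=2000, seed=0):
    """Estimate the standard error of `estimator` by resampling with replacement."""
    rng = random.Random(seed)
    n = len(sample)
    estimates = []
    for _ in range(n_boot):
        # Draw n observations with replacement from the observed sample.
        resample = rng.choices(sample, k=n)
        estimates.append(estimator(resample))
    # The spread of the bootstrap estimates approximates the standard error.
    return statistics.stdev(estimates)


def permutation_test(group_a, group_b, n_perm=2000, seed=0):
    """Two-sided permutation test for a difference in group means."""
    rng = random.Random(seed)
    observed = statistics.fmean(group_a) - statistics.fmean(group_b)
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_perm):
        # Shuffling the pooled data breaks any link between value and group label,
        # which is what the null hypothesis of "no group difference" implies.
        rng.shuffle(pooled)
        diff = statistics.fmean(pooled[:n_a]) - statistics.fmean(pooled[n_a:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add one to numerator and denominator so the p-value is never exactly zero.
    p_value = (extreme + 1) / (n_perm + 1)
    return observed, p_value


if __name__ == "__main__":
    # Hypothetical toy data, made up for illustration only.
    treatment = [10.0, 11.0, 12.0, 13.0, 14.0, 15.0]
    control = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
    print("bootstrap SE of the treatment mean:", bootstrap_se(treatment))
    print("observed difference, p-value:", permutation_test(treatment, control))
```

Both functions take a `seed` so that results are repeatable. In practice you would likely increase the number of resamples until the estimate stabilizes.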
